1. Introduction

2. Flaws in the standard model

3. Symmetry delusions and unification

4. Strings and branes: mathematical fantasies

5. Quantum weirdness – quantum claptrap

6. Alternative models and the ether

1. It is essential to distinguish between experimental and observational data on the one hand and the interpretation of those data on the other. Data are often open to more than one interpretation. Any interpretation is based on certain assumptions, which may be unverified or even unverifiable.

2. A model of reality is not the same as reality itself; a map is not the territory. A model or theory is always a simplification, an approximation; it may have a degree of validity and/or utility without being literally true. Even if the equations associated with a particular theory or model allow accurate calculations of real events, this is no guarantee that any particular physical interpretation of those equations corresponds to mechanisms in the real world.

3. Mathematical abstractions – e.g. zero-dimensional particles, one-dimensional strings, two-dimensional spacetime ribbon-tape, and curved spacetime – are not concrete realities. Such concepts may, or may not, be useful in certain contexts, but they have no concrete existence outside the human imagination; they cannot directly influence the material world and explain nothing. The inability of many scientists to make this distinction is a root cause of many of the absurd theories, or ‘mazes of unrealities’, that are passed off as ‘science’.1

4. In an infinite, eternal universe there can be no ultimate explanations of natural phenomena. But if we want to find the direct causes of events, we have to look to real substances, energies, forces and entities, whether physical or nonphysical. A host of phenomena, and even the very existence of physical matter and force, point to the existence of deeper, subtler levels of reality. As far as physics is concerned, this means thinking in terms of a dynamic, energetic ether.

Reference

1. See G. de Purucker, Fundamentals of the Esoteric Philosophy, Pasadena, CA: Theosophical University Press (TUP), 2nd ed., 1979, p. 476; A.L. Conger (ed.), The Dialogues of G. de Purucker, TUP, 1948, 3:324.

By the end of the 19th century, scientists had discovered nearly all 92 naturally occurring chemical elements; each was understood to consist of its own unique kind of atom. The belief that the atom was indivisible was undermined by the discovery of X-rays in 1895 and radioactivity in 1896, and was shattered in 1897 with the discovery of the electron, the first subatomic particle to be identified. This was followed by the discovery of the proton in 1911 and the neutron in 1932, the two particles that are thought to make up the atomic nucleus. In the decades that followed, subatomic particles began to multiply like rabbits.

Some were found in the cosmic rays constantly bombarding earth from space, but most were generated in particle accelerators, in which particles are smashed together at velocities approaching the speed of light, unleashing tremendous energies. The resulting data can be plotted on a graph showing the number of scattered particles at different collision energies. At certain energies the number shoots up. These peaks are called ‘resonances’, and are usually interpreted as short-lived, highly unstable ‘particles’, which decay almost instantly into other particles. Over 200 resonance particles have been detected.

To try and inject some order into the ‘particle zoo’, the Standard Model of particle physics was developed.1 According to the latest version of this model, there are 12 fundamental particles of matter (known as fermions): six leptons (which include the electron) and six quarks. Each type of lepton or quark is called a ‘flavour’. In addition, there is an antimatter particle corresponding to every fundamental matter particle, with the same properties except opposite charge. Since free quarks have proved impossible to detect, it is theorized that they can exist only in composite particles known as hadrons (this is called ‘confinement’). Hadrons are divided into mesons (consisting of quark-antiquark pairs), and baryons (consisting of three quarks), such as the neutron and proton. Since each quark is said to exist in one of three ‘colours’ (red, green or blue), the total number of elementary fermions comes to 48.

The idea of quark ‘colours’ (a type of charge associated with the strong nuclear force) was invented to prevent them from violating the Pauli exclusion principle, which states that two identical fermions cannot occupy the exact same quantum state. For example, in a proton the two up-quarks possess the same spin, flavour and spatial states, so an additional property – ‘colour’ – had to be invented, which is different for each quark. It was also decided that all baryons and mesons must be ‘colour-neutral’ or ‘white’. This means that protons and neutrons must consist of one red, one green and one blue quark, while mesons must consist of one red or green or blue quark and one anti-red or anti-green or anti-blue antiquark.

The 12 basic fermions are grouped into three generations of successively more massive particles. Their masses range from less than 0.00002 electron mass units for the electron neutrino to over 338,000 for the top quark. Since individual quarks are undetectable, quark masses are largely a theoretical construct.

Table 1 Fermions

| | Leptons | Quarks |
| Generation 1 | Electron, electron neutrino | Up, down |
| Generation 2 | Muon, muon neutrino | Charm, strange |
| Generation 3 | Tau, tau neutrino | Top, bottom |

Modern physics recognizes four fundamental forces or interactions: gravity, electromagnetism, and the weak and strong nuclear forces. Matter particles are said to carry charges which make them susceptible to these forces. The electron, muon and tau each carry an electric charge of -1, and participate in electromagnetic interactions. Neutrinos are said to be electrically neutral. The up, charm and top quarks are said to carry an electric charge of +2/3, while the down, strange and bottom quarks carry an electric charge of -1/3. The neutron (said to consist of one up-quark and two down-quarks) is electrically neutral, while the proton (said to consist of two up-quarks and one down-quark) carries a charge of +1. The three quark ‘colours’ are considered to be an abstract kind of ‘charge’ that enables quarks to participate in strong nuclear interactions. The ‘flavour’ charges of quarks and leptons enable them to interact via the weak nuclear force and change from one to another.
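The charge bookkeeping described above can be retraced with exact fractions. The following is only a minimal sketch: the quark charges and nucleon compositions are the ones quoted in the text, while the names `QUARK_CHARGE` and `hadron_charge` are hypothetical and chosen purely for illustration.

```python
from fractions import Fraction

# Electric charges in units of the elementary charge, as quoted in the text.
QUARK_CHARGE = {
    "up": Fraction(2, 3),
    "down": Fraction(-1, 3),
}


def hadron_charge(quarks):
    """Return the sum of the charges of the listed constituent quarks."""
    return sum((QUARK_CHARGE[q] for q in quarks), Fraction(0))


# Compositions as stated in the text: proton = uud, neutron = udd.
proton = hadron_charge(["up", "up", "down"])
neutron = hadron_charge(["up", "down", "down"])

print("proton charge:", proton)    # prints: proton charge: 1
print("neutron charge:", neutron)  # prints: neutron charge: 0
```

Exact fractions are used rather than floating-point numbers so that the thirds add up to whole charges without rounding error.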

Quantum field theory asserts that each of the four types of force field is quantized. In other words, the electromagnetic and weak and strong nuclear interactions between matter particles allegedly arise from the exchange of force-carrier particles (known as gauge bosons), which are said to be ‘virtual’ particles, constantly flickering into and out of existence. 12 gauge bosons are recognized:

• the massless photon mediates the electromagnetic force;

• the W⁺, W⁻ and Z⁰ bosons mediate weak nuclear interactions, causing certain decay processes; the W bosons have masses of 80.4 GeV and the Z boson has a mass of 91.2 GeV, and they have a mean life of about three tenths of a trillionth of a trillionth of a second (3 × 10⁻²⁵ s), but there is no proof that they are in fact bosons;

• eight massless gluons mediate strong nuclear interactions between quarks; these particles are entirely hypothetical.

Fermions and bosons are assigned an abstract angular-momentum property known as ‘spin’. This has nothing to do with rotation, because these particles allegedly have no physical size and therefore cannot spin. Fermions are said to have half-integer spin (spin-1/2). Bosons are said to have integer spin; the gauge bosons mentioned above have spin-1. This is interpreted to mean that they do not obey the Pauli exclusion principle, meaning that more than one can exist in the same place at the same time (a nonsensical idea), producing a stronger force.

The standard model does not include an explanation of gravity. Most scientists believe that this force is best described by general relativity theory, which claims that gravity is not a force that propagates across space but results from masses distorting the ‘fabric of spacetime’ in their vicinity in some mysterious way. Since ‘curved spacetime’ is a geometrical abstraction, relativity theory is merely a mathematical model and does not provide a realistic understanding of gravity. There are alternative, more rational explanations for all the experiments cited in support of special and general relativity.2 Quantum gravity theories, which go beyond the standard model, postulate that gravity is mediated by a massless, spin-2 graviton, which has not been detected.

Table 2 Fundamental forces

| Force | Relative strength* | Range | Operates among |
| Gravity | 1 | infinite – attractive | all objects in the universe |
| Electromagnetism | 10³⁶ | infinite – attractive/repulsive | charged particles |
| Weak nuclear | 10²⁵ | 10⁻¹⁸ m – attractive/repulsive | elementary particles |
| Strong nuclear | 10³⁸ | 10⁻¹⁵ m – attractive/repulsive | nucleons (protons/neutrons) |

*Approximate; the exact strengths depend on the particles and energies involved.

Fig. 2.1 CERN’s Large Hadron Collider is located in a 27-km-long tunnel near Geneva. It was completed in 2008, cost $10 billion and employs 30,000 people. There are now plans for a 91-km-long Future Circular Collider, costing $38 billion.

Fig. 2.2 The ATLAS particle detector at the Large Hadron Collider.

The standard model predicts another elementary particle, known as the Higgs boson, bringing the total number of elementary fermions and bosons to 61. Sometimes dubbed the ‘god particle’, the Higgs boson is said to be an excitation of the hypothetical Higgs energy field, which supposedly arose from a spontaneous ‘electroweak’ phase transition a fraction of a nanosecond after the big bang. The Higgs field is described as a scalar field: it has a constant magnitude of 246 GeV throughout space, and does not exert a force in any particular direction. Elementary particles (including the Higgs boson) are said to acquire mass by continuously interacting with this field. The Higgs mechanism supposedly involves spontaneous breaking of the SU(2) gauge symmetry – one of the abstract mathematical symmetries theorists have dreamt up to classify and ‘understand’ fundamental forces and particles.
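The total of 61 can be retraced from the counts quoted earlier in the text: six leptons, six quark flavours in three colours, an antiparticle for each, 12 gauge bosons and one Higgs boson. The short tally below is just that arithmetic written out; the variable names are illustrative and not part of any physics library.

```python
# Tally of elementary particles, using only the counts quoted in the text.
LEPTON_FLAVOURS = 6
QUARK_FLAVOURS = 6
QUARK_COLOURS = 3

# Matter particles: leptons plus each quark flavour in three colours.
matter = LEPTON_FLAVOURS + QUARK_FLAVOURS * QUARK_COLOURS  # 6 + 18 = 24

# Every matter particle has an antiparticle.
fermions = 2 * matter  # 48

# Gauge bosons as listed in the text: photon, W+, W-, Z0 and eight gluons.
gauge_bosons = {"photon": 1, "W and Z": 3, "gluons": 8}
bosons = sum(gauge_bosons.values())  # 12

# Adding the single Higgs boson gives the quoted total.
total = fermions + bosons + 1

print(fermions, bosons, total)  # prints: 48 12 61
```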

The Higgs particle is said to have no spin, electric charge or colour charge, and to be so unstable that it decays into other particles in less than a billionth of a trillionth of a second. It cannot therefore be detected directly but only indirectly, by finding its alleged decay products in collider experiments. In July 2012, the CMS and ATLAS experimental teams at the Large Hadron Collider announced that they had each detected a spike in their data which they interpreted to be a very heavy particle with a mass of 125.3 and 126.5 GeV respectively. It was said to be ‘consistent with’ the Higgs boson, though there were certain discrepancies with predictions. The physicists claimed they were at least 99.977% certain it was the Higgs boson.

An analysis published in 2014 concluded that, while a new particle may have been found, there was no conclusive evidence that it was the Higgs particle. However, the European Organization for Nuclear Research (CERN) now claims to be 99.9997% certain that the Higgs boson has been found, while leaving open the possibility of there being multiple types of Higgs boson.3
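Certainty figures of this kind are normally derived from a statistical significance expressed in standard deviations (‘sigma’). The sketch below converts a sigma level into a one-sided Gaussian confidence percentage. The sigma values of 3.5 and 4.5 are assumptions: they are not given in the text, and were picked only because they come close to the two percentages quoted above. The 5-sigma line is included for comparison as the customary discovery threshold in particle physics.

```python
import math


def one_sided_confidence(n_sigma):
    """Percentage of a standard normal distribution lying below n_sigma."""
    # Upper-tail probability of a standard normal beyond n_sigma.
    tail = 0.5 * math.erfc(n_sigma / math.sqrt(2.0))
    return 100.0 * (1.0 - tail)


# Assumed sigma levels (not stated in the text), plus the 5-sigma convention.
for n_sigma in (3.5, 4.5, 5.0):
    print(f"{n_sigma} sigma -> {one_sided_confidence(n_sigma):.5f}%")
```

Such a percentage only expresses how unlikely a background fluctuation of that size would be; it says nothing about which interpretation of the excess is correct.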

Some scientists view the field of high-energy physics as a sick joke. One reason is the huge levels of funding it devours while delivering fewer and fewer new, practical insights. Another reason is its dubious and opaque data selection, processing and interpretation practices. Collider experiments produce more data than could ever be stored or analyzed. In the Large Hadron Collider around 1 billion proton-proton collisions take place every second. Over 99.99% of the data is discarded, and supercomputers then sift through the remaining data (focusing on high-energy interactions), looking for the patterns that theorists have decided are important based on their current beliefs.

The physicists try to separate the rare signals they are looking for – e.g. two photons produced by the decay of a Higgs particle – from the overwhelming ‘background noise’ produced by the millions of proton collisions. But almost every particle-antiparticle pair decays into two photons, and there is no way of knowing for certain whether one of the 10¹² photon pairs produced originated from a ‘Higgs’. The complicated analysis of the experimental data, involving millions of lines of impenetrable computer code and all sorts of theoretical assumptions, is bound to give rise to errors and artifacts.4 There is also plenty of scope for ‘creative’ fudging of the data. As Miles Mathis says, ‘if you apply enough filters and boosters and focusers and dampers to a data set, you can find just about anything you want’. The huge funds flowing into high-energy physics help to sustain the prevailing herd mentality.

The physicists concerned specialize in inventing new theoretical particles, with each new particle requiring an even bigger and more powerful particle accelerator to detect. The existence of the bottom quark was allegedly confirmed in 1977, W and Z bosons in 1983, and the top quark in 1995. If an energy blip more or less matches the predictions, this is hailed as a success. If the predictions are not confirmed, this is also welcomed because it allows the model to be improved by adjusting it to fit the data. In other words, the standard model can never be falsified. A very embarrassing feature is that it has 26 free parameters, including all particle masses and the strengths of the interactions. The model is unable to predict any of these values, so they have to be plugged in by hand, based on experimental evidence.

Plasma physicist Eric Lerner says that although the standard model has made valid predictions within broad limits of accuracy,

it has no practical application beyond justifying the construction of ever-larger particle accelerators. Just as electromagnetism and quantum theory successfully predict the properties of atoms, one might expect a useful theory of the nuclear force to predict at least some properties of nuclei. But it can’t. Nuclear physics has split with particle physics; nuclear properties are interpreted strictly in terms of empirical regularities found by studying the nuclei themselves ...5

A major shortcoming of the standard model is that leptons and quarks are pictured as structureless, zero-dimensional, infinitely small particles. But infinitesimal points are abstractions, not concrete realities with measurable properties. If the electron were infinitely small, the electromagnetic force surrounding it would have an infinitely high energy, since electrical force increases as distance decreases; the electron would therefore have an infinite mass. This is nonsense, for an electron has a mass of 9.1 × 10⁻²⁸ grams, or 511 keV (thousand electron-volts).
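The divergence described above can be illustrated numerically. The sketch below assumes a purely classical picture that is not taken from the text: the electron’s charge is spread over a spherical shell of radius r, whose electrostatic energy is e²/(8πε₀r). The constants are standard SI values, and the function names are hypothetical. The first line of output checks that 9.1 × 10⁻²⁸ grams does correspond to about 511 keV; the loop shows the field energy growing without limit as the radius shrinks.

```python
import math

E_CHARGE = 1.602176634e-19     # elementary charge, C
EPSILON_0 = 8.8541878128e-12   # vacuum permittivity, F/m
M_ELECTRON = 9.1093837015e-31  # electron mass, kg (about 9.1e-28 g)
C_LIGHT = 299792458.0          # speed of light, m/s


def rest_energy_kev(mass_kg):
    """Rest energy m*c^2 converted from joules to keV."""
    return mass_kg * C_LIGHT**2 / E_CHARGE / 1e3


def shell_self_energy_kev(radius_m):
    """Electrostatic energy (keV) of charge e on a shell of the given radius.

    Classical-shell assumption; not a quantum field theory calculation.
    """
    joules = E_CHARGE**2 / (8.0 * math.pi * EPSILON_0 * radius_m)
    return joules / E_CHARGE / 1e3


print(f"rest energy: {rest_energy_kev(M_ELECTRON):.1f} keV")
for radius in (1e-12, 1e-15, 1e-18, 1e-21):
    print(f"r = {radius:.0e} m -> {shell_self_energy_kev(radius):.3e} keV")
```

Since the energy scales as 1/r, each thousandfold reduction in radius multiplies it by a thousand, so no finite value is reached as r goes to zero.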

To get round this embarrassing situation, physicists use a mathematical trick: they simply divide each positive infinity by a negative infinity and then substitute the experimentally known values. This dubious procedure – known as ‘renormalization’ – was pioneered by Richard Feynman, Julian Schwinger and Sin-Itiro Tomonaga, who were awarded the 1965 Nobel Prize in physics for their efforts. Feynman admitted that renormalization was ‘hocus pocus’, and that they had merely ‘swept the infinities under the rug’.6 Quantum field theory has invented ‘Faddeev-Popov ghosts’ – fictitious mathematical ‘ghost fields’, which are included in calculations to get rid of infinities and ensure realistic, finite results.

Quantum electrodynamics (QED), which deals with electromagnetic interactions, is said to be the most accurate theory in the history of science. This is because it supposedly predicts the magnetic moment of the electron to an accuracy of one part in a trillion. In reality, the ‘predicted’ value has been repeatedly adjusted to agree with the latest experimental value, and the ultra-complicated calculations have never been published in their entirety.7 Moreover, without the trick of renormalization, QED only produces infinite results.

Unlike mass and electric charge, many of the other properties that particles are assigned have no realistic, physical meaning. ‘Colour charge’, for example, is a purely abstract ‘property’. And quantum ‘spin’ does not refer to the classical concept of spin; spin-1/2 electrons, for example, supposedly have to be rotated through 360° twice in order to return to their original position, but this is not a rotation in real space. Theoreticians also invented the notion of ‘isospin’: they claim that if a nucleon (a particle composing an atomic nucleus) is ‘spun’ one way it becomes a proton, and if ‘spun’ the other way it becomes a neutron. This ‘spinning’, too, takes place not in the space we move in, but in an imaginary mathematical realm.

Despite sensational claims of finding all six types of quarks predicted by modern theory, single quarks have never been directly observed, and their existence is inferred from the correspondence of their expected properties with the light, heat and path generated by a violent collision in a particle accelerator. In some particle collisions, concentrated jets of particles are emitted in certain directions, and this was interpreted to mean that unobserved quarks are being hit which then emit observable particles in the direction in which they are moving.

Quark theory has been continually modified to accommodate new observations. When Murray Gell-Mann first proposed the theory in 1964, there were only three quarks (and three antiquarks). That number has since risen to 36, plus eight gluons to hold them together, and not a single one of these supposedly physical particles has ever been directly observed. Each quark is theorized to carry an electric charge measuring either one-third or two-thirds of an electron charge, but no such charges have ever been detected.

Theorists initially assumed that the spin of the proton was simply the sum of the spins of the three hypothetical constituent quarks, but experimental data was interpreted to mean that they only accounted for about a quarter of it, giving rise to the ‘proton spin crisis’. So they went back to their desks, juggled the numbers, and solved the crisis by including the spins of the hypothetical gluons, the spins of a hypothetical ‘sea’ of virtual (undetectable) quark-antiquark pairs (theoretically infinite in number, and constantly popping into and out of existence), and the orbital angular momentum of all the quarks and gluons.

Quark theory predicts that protons should interact approximately 25% more frequently if their spins are aligned in the same direction (parallel) than if they are oppositely aligned (antiparallel). But instead, colliding protons interact up to five times more frequently if their spins are parallel aligned – over an order of magnitude greater than predicted. What’s more, they also deflect nearly three times more frequently to the left than to the right. These experiments imply that the spin is carried by the proton itself, not by hypothetical quarks. Lerner suggests that protons are better modelled as some form of vortex. Plasma vortices (plasmoids), for example, interact far more strongly when they are spinning in the same direction.8
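One way to read the ‘order of magnitude’ comparison is to compare the excess of parallel over antiparallel interactions, rather than the raw ratios. That reading is an assumption, since the text does not spell out how the comparison is made. A minimal sketch of the arithmetic, using the two figures quoted above:

```python
# Interaction rate for parallel spins relative to antiparallel spins.
PREDICTED_RATIO = 1.25  # "approximately 25% more frequently"
OBSERVED_RATIO = 5.0    # "up to five times more frequently"

# Excess over the antiparallel rate (assumed basis of the comparison).
predicted_excess = PREDICTED_RATIO - 1.0  # 0.25
observed_excess = OBSERVED_RATIO - 1.0    # 4.0

excess_factor = observed_excess / predicted_excess
raw_factor = OBSERVED_RATIO / PREDICTED_RATIO

print(f"excess is {excess_factor:.0f} times the prediction")
print(f"raw ratio is {raw_factor:.0f} times the prediction")
```

On the excess reading the observed effect is 16 times the predicted one, which is over an order of magnitude; on the raw ratios it is a factor of 4.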

The standard model claims that matter particles were originally massless, even though mass is surely an intrinsic property of matter. As already mentioned, particles (including the Higgs boson) supposedly acquire mass by continuously interacting with the Higgs field; the stronger the interaction, the greater the mass. The strength of the interaction is said to be determined by each particle’s ‘Yukawa coupling constant’, a constant which is assumed to be proportional to the particle’s mass and whose origin is admitted to be a mystery. The interaction is said to involve a particle’s quantum field becoming ‘mathematically coupled’ to the Higgs field. The ridiculous Higgs mechanism was initially invented to make electroweak theory (which ‘unifies’ electromagnetism and the weak force) renormalizable.

A particle with mass exhibits the unexplained property of inertia, meaning that it tends to resist acceleration. Most scientists assume, without any compelling evidence, that accelerated particles radiate energy. Harold Aspden, by contrast, argues that accelerated particles try to conserve their energy – thereby giving rise to inertia.9

The virtual particles (bosons) invoked to account for the four fundamental forces lack any experimental support. The particles emitting or being struck by them would experience a mutually repulsive force, and there is no explanation of how boson impacts could produce an attractive force. Strictly speaking, since force-carrier particles, like fundamental matter particles, are regarded as infinitely small, zero-dimensional point particles, they are no more than mathematical fictions and are therefore incapable of imparting any force at all. Bosons are also called ‘messenger particles’, because they supposedly ‘tell’ other particles what to do rather than exerting an actual force.

The weak nuclear force is a very curious type of ‘force’, and is 11 orders of magnitude weaker than the electromagnetic force. An electron supposedly turns into an electron neutrino by emitting a W boson, and a down quark can supposedly turn into an up quark by emitting a W boson, thereby turning a neutron into a proton. These changes of flavour make possible both radioactive decay and hydrogen fusion. So flipping one quark to another quark flavour allegedly involves the emission of a W particle with a mass around 23,300 times greater than that of the quarks. As Miles Mathis says, ‘That is like using an atomic bomb to flip your mattress.’ In addition, an infinitesimal neutrino can supposedly ‘bounce’ off an infinitesimal electron or quark by exchanging an infinitesimal Z boson whose mass is greater than that of an iron atom.
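The three comparisons in this paragraph can be checked with simple arithmetic. The W and Z masses are the ones quoted earlier in the text, and the relative strengths are those of Table 2, read as powers of ten. The up- and down-quark masses, the atomic mass of iron and the energy equivalent of the atomic mass unit are assumed approximate values that do not appear in the text. The sketch also assumes that the ‘23,300’ figure refers to the average of the two light quark masses.

```python
# Values quoted in the text.
W_MASS_GEV = 80.4
Z_MASS_GEV = 91.2
EM_STRENGTH_EXPONENT = 36    # Table 2: electromagnetism, 10^36
WEAK_STRENGTH_EXPONENT = 25  # Table 2: weak nuclear force, 10^25

# Assumed approximate values, not given in the text.
UP_MASS_GEV = 0.0022         # up-quark mass, roughly 2.2 MeV
DOWN_MASS_GEV = 0.0047       # down-quark mass, roughly 4.7 MeV
IRON_ATOMIC_MASS_U = 55.845  # standard atomic weight of iron
GEV_PER_U = 0.9315           # energy equivalent of one atomic mass unit

# Weak force versus electromagnetism, in orders of magnitude.
orders_weaker = EM_STRENGTH_EXPONENT - WEAK_STRENGTH_EXPONENT

# W boson mass relative to the mean light-quark mass.
mean_light_quark = (UP_MASS_GEV + DOWN_MASS_GEV) / 2
w_to_quark = W_MASS_GEV / mean_light_quark

# Z boson mass relative to an iron atom.
iron_mass_gev = IRON_ATOMIC_MASS_U * GEV_PER_U

print(f"weak force is {orders_weaker} orders of magnitude weaker")
print(f"W boson / mean light quark mass: {w_to_quark:,.0f}")
print(f"iron atom: {iron_mass_gev:.1f} GeV, Z boson: {Z_MASS_GEV} GeV")
```

Because individual quarks are not observed in isolation, the quark masses used here are themselves model-dependent estimates, so the ratio is indicative only.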

Energy events have been measured in particle accelerators that are interpreted as evidence of W and Z bosons, but Harold Aspden’s model of ether physics can explain these events in terms of a far simpler and more intelligible model of what is happening at the subquantum level; the energy thresholds at which short-lived particles are created are determined by the ether structure. Aspden dismisses electroweak theory as ‘a jungle of nonsense’ cloaked in multiple layers of equations.10 His own model provides a far more accurate estimate of the mass of a muon than does electroweak theory.11

The strong nuclear force between neutrons and protons is also very peculiar. Up to a distance of around 10⁻¹⁵ m, it is very strongly repulsive, keeping nucleons apart. Then, for unknown reasons, it abruptly becomes very strongly attractive, before dropping off very rapidly. Current theory claims that this somehow results from the inter-quark gluon force ‘leaking’ out of the nucleon. Obviously if quarks don’t exist no force is required to hold them together. As for the force holding protons and neutrons together, some alternative theories argue that there are no neutrons in the atomic nucleus, only positive and negative charges held together by ordinary electrostatic forces.12

There are serious problems with the theory that ‘virtual’ particles are continually appearing from nowhere and then disappearing so fast as to be unobservable. Each such event violates the law of the conservation of energy, but physicists turn a blind eye to this as it lasts for only a fraction of a second, which is allegedly allowed by the Heisenberg ‘uncertainty principle’. For instance, the enormous mass of newly created W and Z bosons is made possible by the fact that they disappear into nothingness again after just 10⁻²⁵ seconds. However, at any given moment there are unlimited numbers of virtual particles, so no matter how briefly each one exists, this amounts to a permanent loan of infinite energy. Moreover, according to general relativity theory, all this energy should curve the universe into a little ball – which obviously doesn’t happen.

Experiment confirms that detectable electron-positron pairs surround every charged particle. Quantum theory does not explain where they come from or go to; the equations simply contain a ‘creation operator’ and an ‘annihilation operator’. Realistically, since nothing comes from nothing, there must be a subquantum energy realm not included in standard physics, out of which physical particles crystallize and back into which they can dissolve. Richard Feynman invented the notion that a positron (anti-electron) was really an electron travelling backwards in time. It is amazing that such twaddle is taken seriously, while any talk of an etheric medium is dismissed.

Another controversial issue is the status of the neutrino. Neutrinos are said to be chargeless and to pass through ordinary matter almost undisturbed at virtually the speed of light, making them extremely difficult to detect. They come in three types or flavours, and are said to be created by certain types of radioactive decay, by nuclear reactions such as those in nuclear reactors or those alleged to occur in stars, or by the bombardment of atoms by cosmic rays. Most neutrinos passing through the earth are said to come from the sun; around 100 trillion solar electron neutrinos are said to pass through the human body every second, and it would take approximately one light year of lead to block half of them.

For decades, detectors have only observed about a third of the predicted number of solar neutrinos. This problem was ‘solved’ by assuming that neutrinos have a minuscule mass and can change flavour. For some reason, two-thirds of electron neutrinos emitted by the sun supposedly change into muon or tau neutrinos, which go undetected. The muon neutrino is said to be 21,250 times heavier than the electron neutrino, and the tau neutrino is said to be 106 times heavier than the muon neutrino: how these three particles with such different masses can change into each other is not explained.

The neutrino was first postulated in 1930 when it was found that, from the standpoint of relativity theory, beta decay (the decay of a neutron into a proton and an electron) seemed to violate the conservation of energy. Wolfgang Pauli saved the day by inventing the neutrino, a particle that would be emitted along with every electron and carry away energy and momentum (the emitted particle is nowadays said to be an antineutrino). W.A. Scott Murray described this as ‘an implausible ad hoc suggestion designed to make the experimental facts agree with the theory and not far removed from a confidence trick’.13 Aspden calls the neutrino ‘a figment of the imagination invented in order to make the books balance’ and says that it simply denotes ‘the capacity of the aether to absorb energy and momentum’.14 Several other scientists have also questioned whether neutrinos really exist.15

Fig. 2.3 The Super-Kamiokande neutrino observatory in Japan. Situated 2700 m below ground, it consists of a tank 42 m high and 39 m in diameter, containing 32,000 tons of ultra-pure water, viewed by some 13,000 photon detectors. It cost about $100 million to build.

Like particle-accelerator experiments, neutrino detection has developed into a huge industry, and several Nobel Prizes have been awarded to scientists working in that field. However, what such experiments actually detect is not neutrinos themselves, but either the effects of neutrinos on the energy and momentum of a target particle, or the particles into which neutrinos are believed to have changed. Cosmic rays, gamma rays and neutral particles such as the pion, kaon or neutron can mimic the desired neutrino signals, and it is questionable whether the experiments concerned have been properly shielded against them. The detection of neutrinos from the 1987 supernova is often cited as compelling evidence that neutrinos exist. But if genuine neutrinos were detected from that supernova, we ought to detect thousands of neutrino events from the sun every day, whereas only a few dozen are observed.16 The unrepeatable results obtained by neutrino detection experiments, the dubious data manipulation practices, and the frequent failure to publish all the raw data have come in for strong criticism.17

The electron and proton are both stable particles, the proton being 1836 times more massive than the electron. Their antiparticles (the positron and antiproton) are also stable. These four particles are arguably the only four stable particles that are of any importance. As regards neutrons, they have never been directly observed within the atomic nucleus, but because they sometimes appear as a decay product, it is assumed that they exist there. It is further assumed that within the nucleus they are stable, whereas outside the nucleus they are observed to decay into a proton and electron (and an ‘antineutrino’) in about 15 minutes. Quark theory has no explanation for this. Moreover, it is illogical to suppose that a neutron decays into a proton and electron, given that both the neutron and proton are supposed to consist of three quarks, while the electron is said to be an elementary particle containing no quarks.

The decay of a neutron into a proton and electron led to the original hypothesis that a neutron is in fact a bound state of one proton and one electron. This hypothesis was subsequently abandoned because it seemed unable to explain certain neutron properties, though some scientists have argued that these difficulties can be overcome.18 Aspden contends that an atomic nucleus might shed protons and negative beta particles (electrons) in a highly energetic paired relationship, which has been mistaken for an unstable ‘neutron’. He also contends that, besides etheric charges, atomic nuclei contain only protons, antiprotons, electrons and positrons. The ‘neutron’ might really be an antiproton enveloped by a lepton field, including a positron – a theory which can account for the precise lifetime, mass and magnetic moment of the neutron.19

In conclusion, it is highly likely that most of the standard model of particle physics will eventually be discarded as messy, overcomplicated, mathematicized garbage. As the inventor Nikola Tesla once said: ‘Today’s scientists have substituted mathematics for experiments and they wander off through equation after equation and eventually build a structure which has no relation to reality.’20

References

1. en.wikipedia.org/wiki/Standard_Model; en.wikipedia.org/wiki/Fundamental_interactions.

2. See Space, time and relativity, davidpratt.info.

3. en.wikipedia.org/wiki/Higgs_boson; David Dilworth, ‘Did CERN find a Higgs?’, July 2012, cosmologyscience.com; Chuck Bednar, ‘God particle findings were inconclusive, according to new analysis’, 7 Nov. 2014, redorbit.com; en.wikipedia.org/wiki/Search_for_the_Higgs_boson.

4. Alexander Unzicker, The Higgs Fake: How particle physicists fooled the Nobel Committee, alexander-unzicker.com, 2013, ch. 4; Alexander Unzicker and Sheilla Jones, Bankrupting Physics: How today’s top scientists are gambling away their credibility, New York: Macmillan, 2013, ch. 13.

5. Eric J. Lerner, The Big Bang Never Happened, New York: Vintage, 1992, pp. 346-7.

6. Quoted in D.L. Hotson, ‘Dirac’s equation and the sea of negative energy’, part 1, Infinite Energy, v. 43, 2002, pp. 43-62 (p. 45).

7. Oliver Consa, Something is wrong in the state of QED, October 2021, arxiv.org.

8. The Big Bang Never Happened, pp. 347-8; Paul LaViolette, Genesis of the Cosmos: The ancient science of continuous creation, Rochester, VT: Bear and Company, 2004, p. 311.

9. Harold Aspden, Cosmic mud or cosmic muddle?, 2000, haroldaspden.org; Harold Aspden, Creation: The physical truth, Brighton: Book Guild, 2006, pp. 90-2.

10. Harold Aspden, What is a ‘supergraviton’?, 1997; Photons, bosons and the Weinberg angle, 1997; Why Higgs?, 2000, haroldaspden.org.

11. Harold Aspden, Aether Science Papers, Southampton: Sabberton Publications, 1996, p. 58.

12. Aspden, Creation, pp. 39-40, 116.

13. W.A. Scott Murray, ‘A heretic’s guide to modern physics: haziness and its applications’, Wireless World, April 1983, pp. 60-2.

14. Harold Aspden, What is a neutrino?, 1998, haroldaspden.org; Creation, pp. 179-81.

15. Miles Mathis, The solar neutrino problem, 2013, milesmathis.com; Ricardo L. Carezani, Storm in Physics: Autodynamics, Society for the Advancement of Autodynamics, 2005, chs. 4, 13; Society for the Advancement of Autodynamics, autodynamicsuk.org, autodynamics.org; Paulo N. Correa and Alexandra N. Correa, To be done with the judgement of anorgonomy, 2001, What is dark energy?, 2004, aetherometry.com; David L. Bergman, Fine-structure properties of the electron, proton and neutron, 2006, commonsensescience.org; Erich Bagge, World and Antiworld as Physical Reality: Spherical shell elementary particles, Frankfurt am Main: Haag + Herchen, 1994, pp. 109-42.

16. Ricardo L. Carezani, SN 1987 A and the neutrino, autodynamicsuk.org, autodynamics.org.

17. Neutrinos at Fermi Lab, autodynamicsuk.org; Super-Kamiokande: super-proof for neutrino non-existence, autodynamicsuk.org.

18. Bergman, Fine-structure properties of the electron, proton and neutron, commonsensescience.org; Quantum AetherDynamics Institute, Neutron, 16pi2.com; Bagge, World and Antiworld as Physical Reality, pp. 262-70; R.M. Santilli, Ethical Probe on Einstein’s Followers in the U.S.A., Newtonville, MA: Alpha Publishing, 1984, pp. 114-8.

19. Creation, pp. 148-9; Harold Aspden, ‘The theoretical nature of the neutron and the deuteron’, Hadronic Journal, v. 9, 1986, pp. 129-36.

20. Nikola Tesla, ‘Radio power will revolutionize the world’, Modern Mechanix and Inventions, July 1934.

For most physicists, unification means symmetry. At higher and higher energies, matter and force particles begin to merge together, resulting in more and more symmetry. Perfect symmetry supposedly existed just after the big bang, the mythical event when, according to some scientists, all existence – all matter and energy, and even space and time – exploded into being out of nothing and space began to expand. The age of perfect symmetry (known as the Planck Epoch) is believed to have lasted for just one ten-million-trillion-trillion-trillionth of a second (10⁻⁴³ s). But as the universe expanded and cooled, a series of spontaneous symmetry-breaking events allegedly occurred as a result of phase transitions.

Initially, the four fundamental forces were unified in a single superforce of perfect symmetry. At 10⁻⁴³ seconds, gravity separated from the other three forces. At 10⁻³⁶ seconds, the strong nuclear force separated from the electroweak force, triggering a brief instant of exponential expansion called cosmic inflation, during which space supposedly expanded 300 trillion trillion times faster than light for one hundred-billion-trillion-trillionth of a second. Finally, at 10⁻¹² seconds, the electroweak force split into the electromagnetic and weak nuclear forces. The energy released when inflation ended created a dense, superhot plasma of quarks and gluons, which later combined to form protons and neutrons. These then combined to form atomic nuclei, and finally, 380,000 years after the big bang, nuclei and electrons began to join together to form atoms. Such are the latest fantasies of official science.

Each year around $3 billion is spent on high-energy physics worldwide. The aim is to build ever bigger and more powerful particle accelerators in order to recreate the more unified and symmetrical state of affairs that supposedly existed just after the big bang. This could be called the sledgehammer approach to unification: smash things together violently enough and they’ll merge into one. There is little doubt that an unlimited number of further short-lived ‘resonances’ will be found as the velocity of particle collisions is increased. But there is no reason to think that they have any great relevance to understanding the structure of the material world. There is not a single realistic mainstream theory of what electrons and protons (and their antiparticles) actually are, and scientists are unlikely to become any wiser by studying the debris produced by smashing these particles together at ultra-high energies.

Fig. 3.1 The DELPHI detector at the Large Electron-Positron Collider (LEP), CERN. The LEP was dismantled in 2001 to make way for the Large Hadron Collider, which reused the same tunnel.

Fig. 3.2 Particle tracks (trails of tiny bubbles) in an old-style bubble chamber. An antiproton enters from the bottom, collides with a proton (at rest), and ‘annihilates’, giving rise to eight pions. (particleadventure.org)

The road to unification began in the 19th century. A unified understanding of electricity and magnetism was supposedly achieved by James Clerk Maxwell, who published his equations on the subject in 1864. Although this is what the textbooks assert, many scientists have shown that Maxwell’s equations do not provide a proper understanding of electric and magnetic forces. James Wesley writes: ‘the Maxwell theory fails many experimental tests and has only a limited range of validity ... The fanatical belief in the validity of Maxwell’s theory for all situations, like the fanatical belief in “special relativity”, which is purported to be confirmed by the Maxwell theory, continues to hamper the progress in physics.’1 Pioneering scientists and inventors Paulo and Alexandra Correa argue that Maxwell’s fundamental errors included the wrong dimensional units for magnetic and electric fields and for current – ‘two epochal errors now reproduced for over a century, and which have done much to arrest the development of field theory’.2

According to Newton’s third law, every action causes an equal and opposite reaction. But out-of-balance electrodynamic forces have been observed in various experimental settings, e.g. anomalous cathode reaction forces in electric discharge tubes, and anomalous acceleration of ions by electrons in plasmas. To explain this, some scientists argue that the modern relativistic Lorentz force law should be replaced by the older Ampère force law.3 But this law, too, is inadequate. Both Lorentz and Ampère assumed that interacting electrical circuits cannot exchange energy with the local ‘vacuum’ medium (i.e. the ether).

In 1969 Harold Aspden published an alternative law of electrodynamics, which can explain all the experimental evidence: it modifies Maxwell’s third law of electrodynamics to take account of the different masses of the charge carriers involved (e.g. ions and electrons), and allows energy to be transferred to and from the surrounding ether. Action and reaction only balance, says Aspden, if the ether is taken into account.4 An additional problem is that Lorentz assumed that the field force propagates at the speed of light, while Ampère assumed instantaneous action at a distance. Clearly, to retain causality, forces must propagate at finite speeds, but the Correas argue that the field force, which is carried by etheric charges, is not restricted to the speed of light, and should not be confused with the mechanical force that two physical charges exert on one another.5

The correctness of Aspden’s law is demonstrated by the Pulsed Abnormal Glow Discharge (PAGD) reactors developed by the Correas, which produce more power than is required to run them by exciting self-sustaining oscillations in a plasma discharge in a vacuum tube. With an overall performance efficiency of 483%, the devices are clearly drawing energy from a source that does not exist for official physics; Aspden identifies the mechanism involved as ‘ether spin’.6 The US Patent Office granted three patents for the PAGD invention in 1995/96, thereby confirming that the ‘impossible’ – over-unity power generation – is in fact possible.

However, mainstream theoretical physicists are too busy theorizing to pay any attention to verifiable experimental facts and technological discoveries that upset classical electromagnetic theory. As Wilhelm Reich put it, ‘they have abandoned reality to withdraw into an ivory tower of mathematical symbols’.7 Believing that electricity and magnetism had been adequately unified, they blindly went ahead with the next step in ‘unification’: by invoking abstract symmetries (gauge theory), the electromagnetic and weak nuclear forces were supposedly shown in the 1970s to be facets of a common ‘electroweak’ force. As already mentioned, some nonmainstream researchers dismiss electroweak theory as idle mathematical speculation.

The next move was to try and unify the electroweak and strong forces into a ‘grand unified force’. According to Grand Unified Theories (or GUTs), the fusion of these two forces takes place at energies above 10¹⁴ GeV. The corresponding force-carrier particles are known as X and Y bosons, but they have not been observed as their theoretical energies are far beyond the reach of any accelerator. In big bang mythology, the Grand Unification Epoch lasted from 10⁻⁴³ to 10⁻³⁶ seconds after the big bang.

One testable prediction of GUTs is the slow decay of protons into pions and positrons. Without proton decay the big bang theory collapses, because all the matter and antimatter created in the first instants of time would annihilate each other and there would be no excess of protons and electrons left over to form the observable universe. The GUTs predicted that protons should have an average life of 10³⁰ years. However, experiments failed to find any sign of proton decay. So GUT theorists went back to their blackboards, tweaked their equations, and proved that the lifetime of protons was about 10³³ years – far too long to be disproved by any experiments at that time. Nowadays, the proton’s lifetime is estimated to be greater than 10³⁴ years.

GUT models predicted the existence of extremely massive particles known as magnetic monopoles or ‘hedgehogs’ (all known magnets are dipoles, i.e. have north and south poles). None have been detected, but this is attributed primarily to cosmic inflation, which supposedly diluted their abundance to effectively zero within our observable universe. GUTs also predicted topological defects in ‘spacetime’, such as one-dimensional cosmic strings and two-dimensional domain walls. Not surprisingly, these abstract mathematical constructs have never been observed.

In order to include gravity and produce a fully unified theory of the original ‘superforce’, theorists are seeking a quantum description of gravity. As mentioned in the previous section, modelling an electron as a point particle means that the energy of the virtual photons surrounding it is infinite and the electron has an infinite mass – an embarrassment that is overcome by applying the mathematical trick of renormalization. While photons react strongly with charged particles but do not couple with each other, gravitons are believed to couple extremely feebly with matter particles but to interact strongly with each other. This means that each matter particle is surrounded by an infinitely complex web of graviton loops, with each level of looping adding a new infinity to the calculation.8 This made it necessary to divide both sides of the equation by infinity an infinite number of times.

Meanwhile, symmetry had been superseded by supersymmetry (or SUSY), which is rooted in the concept of spin. The basic idea is that matter particles (fermions) and force-carrier particles (bosons) are not really two different kinds of particles, but one. Each elementary fermion is assumed to have a boson superpartner with identical properties except for mass and spin. For each quark there is a squark, for each lepton a slepton, for each gluon a gluino, for each photon a photino, etc. In addition, for the bosonic Higgs field it is necessary to postulate a second set of Higgs fields with a second set of superpartners (Higgsinos).

A major problem is that these new particles (known as ‘sparticles’) cannot have the same masses as the particles already known, otherwise they would have already been observed; they must be so heavy that they could not have been produced by current particle accelerators. This means that supersymmetry must be a spontaneously broken symmetry, and this is said to be a disaster for the supersymmetric project as it would require a vast array of new particles and forces on top of the new ones that come from supersymmetry itself. This completely destroys the ability of the theory to predict anything. The end result is that the model has at least 105 extra undetermined parameters that were not in the standard model.9

Supersymmetry required the graviton to be accompanied by several types of gravity-carrying particles called gravitinos, each with spin-3/2. It was thought that these might somehow manage to cancel out positive infinities from graviton loops. But the infinity cancellations were found to fail when many loops were involved.10

Theorists seeking a geometrical explanation for all the forces of nature, rather than gravity alone, found that at least 10 dimensions of space and one of time were required, making 11 in all. The fact that the most economical description of ‘supergravity’ (the combined effect of gravitons and gravitinos) – called N = 8 – also required 11 dimensions gave the theory a boost. In 1980 Stephen Hawking declared that there was a 50-50 chance of a ‘theory of everything’ being achieved by the year 2000, and N = 8 was his candidate for such a theory. But within just four years this theory had gone out of fashion.11 As well as being plagued with infinities, it required space and time together to possess an even number of dimensions to accommodate spinning particles, but 11 – even for advanced mathematicians – is an odd number.12

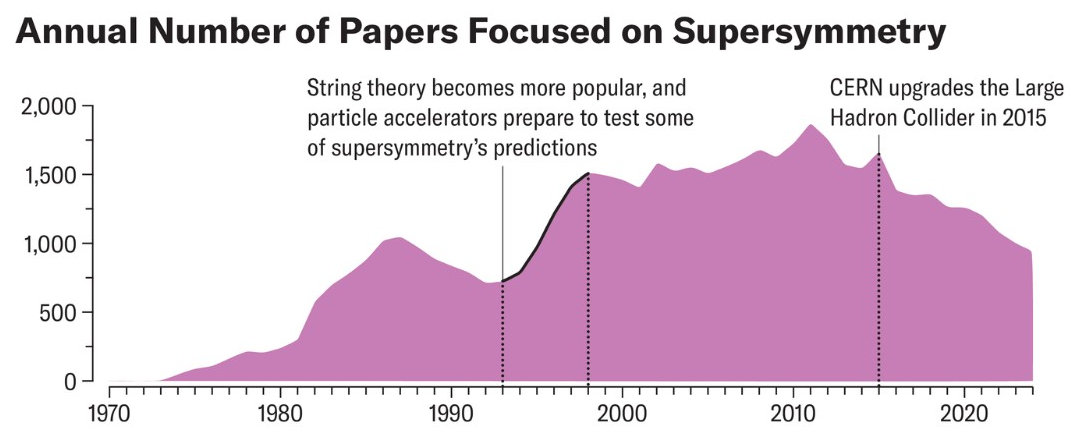

Fig. 3.3

According to Nobel laureate Sheldon Glashow, one of the architects of the standard model, ‘Physicists tend to be faddish, following trends. At times, belief in supersymmetry seemed almost biblical.’ SUSY was a huge industry from the 1990s until around 2015. In that year upgrades to the Large Hadron Collider nearly doubled the energy of its collisions, but analyses of the data produced still failed to find any of the particles the theory required, so enthusiasm for SUSY is now on the wane.13

References

1. James Paul Wesley, Classical Quantum Theory, Blumberg: Benjamin Wesley, 1996, pp. 250, 285-7.

2. Paulo N. Correa and Alexandra N. Correa, ‘The aetherometric approach to solving the fundamental problem of magnetism’, ABRI monograph AS2-15, in Experimental Aetherometry, vol. 2B, Concord: Akronos Publishing, 2006, pp. 1-33 (aetherometry.com).

3. Thomas E. Phipps, Old Physics for New: A worldview alternative to Einstein’s relativity theory, Montreal: Apeiron, pp. 108-14; Peter Graneau and Neal Graneau, Newton versus Einstein: How matter interacts with matter, New York: Carlton, 1993, ch. 4; Paul LaViolette, Genesis of the Cosmos: The ancient science of continuous creation, Rochester, VT: Bear and Company, 2004, pp. 229-31, 272.

4. Harold Aspden, Creation: The physical truth, Brighton: Book Guild, 2006, pp. 159-61; Harold Aspden, A problem in plasma science, 2005, aetherometry.com.

5. Paulo N. Correa and Alexandra N. Correa, A note on the law of electrodynamics, 2005, aetherometry.com.

6. Paulo N. Correa and Alexandra N. Correa, Excess energy (XS NRG™) conversion system utilizing autogenous Pulsed Abnormal Glow Discharge (aPAGD), 2005, aetherometry.com; Harold Aspden, Power from Space: The Correa invention, Energy Science Report no. 8, Southampton: Sabberton Publications, 1996, aspden.org.

7. Wilhelm Reich, Ether, God and Devil; Cosmic Superimposition, New York: Farrar, Straus and Giroux, 1973, p. 82.

8. Paul Davies and John Gribbin, The Matter Myth, New York: Touchstone/Simon & Schuster, 1992, p. 244.

9. Peter Woit, Not Even Wrong: The failure of string theory and the continuing challenge to unify the laws of physics, London: Vintage, 2007, pp. 172-4.

10. The Matter Myth, pp. 249-50.

11. Not Even Wrong, pp. 110-2.

12. The Matter Myth, p. 253.

13. scientificamerican.com/article/supersymmetrys-long-fall-from-grace.

Another ridiculous fad is string theory, which began to become fashionable in the 1980s and is considered to provide a promising theoretical framework for unifying the four fundamental forces. It postulates that the fundamental building blocks of all particles and fields, and even space (and time!), are one-dimensional ‘strings’. These hypothetical objects are said to average a billion-trillion-trillionth of a centimetre (10⁻³³ cm) in length but to have no breadth or thickness. Strings allegedly exist in 10 dimensions of spacetime (or 26 according to an earlier version of the theory), but the reason we see only three dimensions of space is that the others have conveniently shrivelled out of sight (or ‘compactified’) so that they are unobservable. Strings – or ‘superstrings’, if supersymmetry is included – can stretch, contract, wiggle, vibrate and collide, they can be open or closed (like loops), and their various modes of vibration are said to correspond to different particles and their properties (such as charge).

Fig. 4.1 Strings supposedly have length but no breadth or thickness. A one-dimensional entity is a pure abstraction, and would obviously not look anything like the objects shown in this diagram. (ft.uam.es)

Fig. 4.2 As you can see in this animation, compactifying an extra dimension is really very easy! (mysite.wanadoo-members.co.uk)

String theorists cannot explain how strings produce the reality we live in, as the number of ways in which the alleged hidden dimensions can be curled up and configured is estimated to be 10⁵⁰⁰ (1 followed by 500 zeros). Each of these ‘vacuum solutions’ results in different particles, forces and physical laws, and theorists do not know which one corresponds to our own universe. This is called the ‘string theory landscape problem’, and basically means that the theory is untestable.

(xkcd.com)

Replacing zero-dimensional point particles with one-dimensional, infinitely thin strings and conjuring up six extra ‘compactified’ dimensions of space is hardly much of an advance towards a realistic model. Yet superstring theory is widely regarded as one of the most promising candidate theories of quantum gravity, which aims to harmonize general relativity theory (which focuses on continuous fields) with quantum mechanics (which focuses on discrete quanta). However, string theory has zero experimental support; to detect individual strings would require a particle accelerator at least as big as our galaxy. Moreover, the mathematics of string theory is so complex that no one knows the exact equations, and even the approximate equations are so complicated that so far they have only been partially solved.1

In 2007 physicist Peter Woit wrote: ‘More than twenty years of intensive research by thousands of the best scientists in the world producing tens of thousands of scientific papers has not led to a single testable experimental prediction of superstring theory.’2 This is because superstring theory ‘refers not to a well-defined theory, but to unrealised hopes that one might exist. As a result, this is a “theory” that makes no predictions, not even wrong ones, and this very lack of falsifiability is what has allowed the whole subject to survive and flourish.’ He accuses string theorists of ‘groupthink’ and ‘an unwillingness to evaluate honestly the arguments for and against string theory’.3

In 1995 superstring guru Edward Witten sparked the ‘second superstring theory revolution’ by proposing that the five variants of string theory, together with 11-dimensional supergravity, were part of a deeper theory, which he dubbed M-theory. This theory postulates a universe of 11 dimensions, inhabited not only by one-dimensional strings but also by higher-dimensional ‘branes’: two-dimensional membranes, three-dimensional blobs (three-branes), and also higher-dimensional entities, up to and including nine dimensions (nine-branes). It is speculated that the fundamental components of ‘spacetime’ may be zero-branes (i.e. infinitesimal points).4

No one is sure what the ‘M’ in M-theory stands for. Supporters suggest: membrane, matrix, master, mother, mystery or magic. Others suggest it is an upside-down ‘W’, for ‘Witten’. Critics have proposed that the ‘M’ stands for: missing, murky, moronic or mud. Another suggestion is ‘masturbation’, in line with Gell-Mann’s likening of mathematics to mental masturbation. In any event, as physicist Lee Smolin says, M-theory ‘is not really a theory – it is a conjecture about a theory we would love to believe in’.5

Many brane theorists believe that our world is a three-dimensional surface or brane embedded in a higher-dimensional space, called the ‘bulk’. In some models other branes are moving through the bulk and may interact with our own. To explain the relative weakness of the gravitational force, theorists proposed that all four forces were confined to our own brane but that gravity was ‘leaking’ into the bulk by some unknown mechanism. In 1999 came another proposal: that gravity resides on a different brane than ours, separated from us by a five-dimensional spacetime in which the extra dimension is either 10⁻³¹ cm wide or perhaps infinite. In this model,

all forces and particles stick to our three-brane except gravity, which is concentrated on the other brane and is free to travel between them across spacetime, which is warped in a negative fashion called anti-De Sitter space. By the time it gets to us, gravity is weak; in the other brane it is strong, on a par with the three other forces.

The two scientists who concocted this widely discussed model said they had been ‘dead scared’ because ‘there was a distinct fear of making complete fools of ourselves’.6 It’s hard to see why they were so worried: their absurd speculations are no dafter than the rest of the brainless buffoonery that passes for brane theory.

String theory and M-theory, like standard particle physics, are wedded to the prevailing cosmological myths of the big bang and expanding space.7 Some big bangers have argued that at the moment of the big bang the entire universe – including space itself – exploded into being out of nowhere in a random quantum fluctuation. Before it started to expand, it measured just 10⁻³³ cm across, and had infinite temperature and density. Nowadays the term ‘big bang’ often refers to the hot, dense state that supposedly followed the brief instant of cosmic inflation, though sometimes it also includes inflation. The universe we can currently observe through telescopes is about 93 billion light years across, but when inflation began, the observable universe was allegedly only eight hundredths of a trillionth of a trillionth of a millimetre across. Then it suddenly grew exponentially, doubling in size 95 times in a fraction of a second, until it was about 1.8 metres across, after which the rate of expansion slowed down.
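The inflation figures quoted above can be checked with simple arithmetic. The following is a minimal sketch in Python, assuming a literal reading of the numbers in the text (a starting size of eight hundredths of a trillionth of a trillionth of a millimetre, and exactly 95 doublings); the variable names are illustrative and are not taken from any source.

```python
# Figures as quoted in the text; the variable names are illustrative only.
start_mm = 0.08 * 1e-12 * 1e-12  # eight hundredths of a trillionth of a trillionth of a millimetre
start_m = start_mm / 1000.0      # convert millimetres to metres
doublings = 95                   # number of doublings quoted in the text

growth_factor = 2 ** doublings   # each doubling multiplies the size by 2
end_m = start_m * growth_factor  # size after all the doublings

print(f"start size:    {start_m:.1e} m")
print(f"growth factor: {growth_factor:.2e}")
print(f"end size:      {end_m:.1f} m")
```

On this reading the final size comes out at a few metres, the same order of magnitude as the quoted figure of about 1.8 metres; the exact result depends on how the starting size and the number of doublings have been rounded.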

The core myth of the big bang fairytale is that space is expanding. But no one has ever directly measured any expansion of space. The standard view is that space does not in fact expand within our own solar system or galaxy or within our local group of galaxies or even our own cluster of galaxies; instead, it only expands between galaxy clusters and superclusters – where, conveniently, there is no earthly chance of making any direct observations to confirm or refute it. Expanding space is simply a far-fetched interpretation of the fact that light from distant galaxies displays a spectral redshift. A far more sensible interpretation of the redshift is that light loses energy as it travels through space. In the late 1990s cosmologists ‘deduced’ that the (imaginary) expansion of space is accelerating and, to ‘explain’ this, they invented ‘dark energy’ – which somehow produces a small repulsive force or ‘cosmological constant’. Dark energy supposedly accounts for 68% of the universe’s mass-energy.

Fig. 4.3 Left: According to one theory, our universe was created by the collision of two branes and, after expanding and dying out, it will be recreated by another brane collision (science.org). Right: A more desirable form of ‘brane’ collision: two brane-theorists having their heads knocked together in an effort to make them see sense.

An alternative big bang model – known as the cyclic universe – was put forward by Paul Steinhardt and Neil Turok in 2001. They start from the view that our three-dimensional world or brane is embedded in a space with an extra spatial dimension, and is separated by a microscopic distance from a second similar brane. A weak, spring-like force (dark energy) holds the two branes together and causes them to approach one another and bounce apart at regular intervals. At present, the two branes are moving apart, causing space to expand. After a trillion years or so, the fifth dimension will begin to contract, and space will cease to expand, but will not contract. It is thought that the two branes will never actually collide but, as they get closer, they will repel each other, initiating a new big bang. Steinhardt claims that ‘unproven and exotic notions’ like extra dimensions and branes are helping to make the idea of a cyclic universe ‘more comprehensible, and perhaps even compelling as a model for our universe’.8 However, given that the model makes the elementary error of treating mathematical abstractions as concrete realities, it is merely yet another example of the arbitrary nonsense that can be dreamt up once the mathematical imagination is given free rein.

As mentioned, there are an estimated 10⁵⁰⁰ ways in which the extra dimensions of string theory and M-theory can be configured. Some scientists speculate that every possible geometry does exist, but in separate ‘bubble universes’, which together form a multiverse. The endless creation of bubble universes is part of the ‘eternal inflation’ theory, which claims that the exponential expansion of space continues forever in most of the universe, while stopping in some regions, each of which becomes a separate bubble, which expands from its own big bang and has its own physical features and laws, sometimes allowing the evolution of life and intelligence.

Peter Woit says that superstring theorists can be very arrogant and ‘often seem to be of the opinion that only real geniuses are able to work on the theory, and that anyone who criticises such work is most likely just too stupid and ignorant to understand it’. A rather excitable superstring enthusiast, a Harvard faculty member, said that those who criticize the funding of superstring theory are ‘terrorists’ and deserve to be eliminated by the US military.9 Paul Dirac once said: ‘It is more important to have beauty in one’s equations than to have them fit experiment.’10 Some physicists argue that the mathematics of string theory and M-theory is so beautiful that it cannot possibly be wrong. But Woit says that these theories are ‘neither beautiful nor elegant’:

The ten- and eleven-dimensional supersymmetric theories actually used are very complicated to write down precisely. The six- or seven-dimensional compactifications of these theories necessary to try to make them look like the real world are both exceedingly complex and exceedingly ugly.11

Richard Feynman bluntly dismissed string theory as ‘nonsense’.12 And another Nobel laureate, Sheldon Glashow, once commented: ‘Contemplation of superstrings may evolve into an activity ... to be conducted at schools of divinity by future equivalents of medieval theologians. ... For the first time since the dark ages we can see how our noble search may end with faith replacing science once again.’13 String enthusiast Michio Kaku has described the basic insight of string theory as ‘The mind of God is music resonating through 11-dimensional hyperspace.’ Woit comments: ‘Some physicists have joked that, at least in the United States, string theory may be able to survive by applying to the federal government for funding as a “faith-based initiative”.’14 Increasingly vocal critics within the physics community accuse M-theorists, brane-worldists and string cosmologists of dealing in mathematics rather than physics. Harvard physicist Howard Georgi characterized modern theoretical physics as ‘recreational mathematical theology’.15 String theory is far closer to being a theory of nothing than a theory of everything.

In an effort to simplify the standard model of particle physics, some physicists in the late 1970s proposed that quarks and leptons might consist of subcomponents, named ‘preons’ (also known as prequarks or subquarks); they were regarded as point particles, and according to one model only two kinds were needed. However, theorists were unable to formulate a model which could account for both the small size and light weight of observed particles, and no experimental evidence turned up. Later, more exotic preon models were developed, which propose that bosons, too, are composed of preons.

One model proposed that preons are two-dimensional ‘braided ribbons’, ‘braids of spacetime’, or ‘pieces of spacetime ribbon-tape’. It was thought that this model could be linked to M-theory, but also to a theory known as loop quantum gravity. The latter theory posits that, on the Planck scale (10⁻³³ cm), ‘spacetime’ is not a smooth continuum but is quantized (i.e. granular), and consists of closed one-dimensional loops (or lines of force), each being a quantized excitation of the gravitational field. One of its alleged advantages is that, unlike string theory, it is ‘background-independent’, meaning that it does not assume any fixed geometry and properties of space and time; the number of spatial dimensions could even change from one moment to the next.16 Such theoretical fantasies are just as untestable as string theory.

Fig. 4.4 This diagram represents a ‘big bounce’. According to loop quantum gravity, the universe expands, crunches and bounces from one classical region of spacetime to another via a quantum bridge (science.psu.edu). It sounds like another very promising concept – for science fiction writers!

Whatever the ultimate fate of string theory and M-theory, the modern scientific obsession with abstract topologies, geometries, symmetries and extra dimensions looks set to continue for some time to come. But there is no evidence that the dimensions invented by modern scientists are anything other than mathematical fictions. The standpoint expressed by H.P. Blavatsky therefore still holds: ‘popular common sense justly rebels against the idea that under any condition of things there can be more than three of such dimensions as length, breadth, and thickness.’17 Theosophy postulates endless interpenetrating worlds or planes composed of different grades of energy-substance, with only our own immediate world being within our range of perception. But other planes are not extra ‘dimensions’; on the contrary, objects and entities on any plane are extended in three dimensions – no more and no less.18

Notes and references

1. Brian Greene, The Elegant Universe: Superstrings, hidden dimensions, and the quest for the ultimate theory, London: Vintage, 2000, p. 19.

2. Peter Woit, Not Even Wrong: The failure of string theory and the continuing challenge to unify the laws of physics, London: Vintage, 2007, p. 208.

3. Not Even Wrong, pp. 6, 9.

4. The Elegant Universe, pp. 287-8, 379.

5. Lee Smolin, The Trouble with Physics: The rise of string theory, the fall of a science and what comes next, London: Allen Lane, 2006, p. 147.

6. Marguerite Holloway, ‘The beauty of branes’, 26 September 2005, sciam.com.

7. Trends in cosmology: beyond the big bang, davidpratt.info.

8. seedmagazine.com/news/2007/07/a_cyclic_universe.php; P.J. Steinhardt and N. Turok, ‘The cyclic model simplified’, New Astronomy Reviews, v. 49, nos. 2-6, 2005, pp. 43-57.

9. Not Even Wrong, pp. 206-7, 227.

10. Quoted in William C. Mitchell, Bye Bye Big Bang, Hello Reality, Carson City, NV: Cosmic Sense Books, 2002, p. 389.

11. Not Even Wrong, p. 265.

12. Quoted in Not Even Wrong, p. 180.

13. Quoted in Eric J. Lerner, The Big Bang Never Happened, New York: Vintage, 1992, p. 358.

14. Not Even Wrong, p. 216.

15. Quoted in Richard L. Thompson, God and Science: Divine causation and the laws of nature, Alachua, FL: Govardhan Hill Publishing, 2004, p. 52.

16. The Trouble with Physics, pp. 73-4, 82, 249-54.

17. H.P. Blavatsky, The Secret Doctrine, TUP, 1977 (1888), 1:252; G. de Purucker, Esoteric Teachings, San Diego, CA: Point Loma Publications, 1987, 3:30-1.

18. One writer (Sunrise, Dec. 1995/Jan. 1996) has claimed that the extra dimensions proposed by string theory are ‘foreshadowed’ by H.P. Blavatsky’s statement in 1888 that ‘six is the representation of the six dimensions of all bodies: ...’ (The Secret Doctrine, 2:591). But note how the quotation continues: ‘... the six lines which compose their form, namely, the four lines extending to the four cardinal points, North, South, East, and West, and the two lines of height and thickness that answer to the Zenith and the Nadir.’ In other words, Blavatsky is simply referring to the ordinary three dimensions of space (length, breadth and height/thickness). At any point in three-dimensional space we can construct three intersecting lines or axes that are all at right angles to one another; each line extends in two directions, so that there are six directions in total. If there were a fourth dimension of space, we would be able to add a fourth line at right angles to all the other three.

5. Quantum weirdness – quantum claptrap

The famous ‘uncertainty principle’ formulated by Werner Heisenberg in 1927 says that it is impossible to simultaneously measure with precision both the position and momentum of a particle, or the energy and duration of an energy-releasing event; the product of the two uncertainties can never be less than a quantity of the order of Planck’s constant (h). It goes without saying that some measurement uncertainty must exist, since any measurement must involve the exchange of at least one photon of energy, which disturbs the system being observed in an unpredictable way. Obviously, the fact that we don’t know the exact properties of a particle or the exact path it follows does not mean that the particle has no definite trajectory or properties except when we are trying to observe it. Yet this was the interpretation proposed by Danish physicist Niels Bohr, and most physicists in the 1920s followed his lead, giving rise to the prevailing Copenhagen interpretation of quantum physics.
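
For reference, the relations described above are usually written in modern textbooks roughly as follows. The notation (Δx, Δp, ΔE, Δt for the respective uncertainties, and ħ for Planck’s constant divided by 2π) is the standard textbook one and is not taken from the sources cited in this article. Heisenberg’s own 1927 estimate was an order-of-magnitude statement involving h; the sharper bound with ħ/2 is the form commonly quoted today.

```latex
\Delta x \,\Delta p \;\ge\; \frac{\hbar}{2},
\qquad
\Delta E \,\Delta t \;\gtrsim\; \frac{\hbar}{2},
\qquad
\hbar = \frac{h}{2\pi}
```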

The conventional interpretation assumes that particles are subject to utterly random quantum fluctuations; in other words, the quantum world is believed to be characterized by absolute indeterminism and irreducible lawlessness. David Bohm, on the other hand, took the view that the abandonment of causality had been too hasty:

it is quite possible that while the quantum theory, and with it the indeterminacy principle, are valid to a very high degree of approximation in a certain domain, they both cease to have relevance in new domains below that in which the current theory is applicable. Thus, the conclusion that there is no deeper level of causally determined motion is just a piece of circular reasoning, since it will follow only if we assume beforehand that no such level exists.1

James Wesley argued that the uncertainty principle is logically and scientifically unsound, and empirically false. In his view, it applies only in certain restricted measurement situations, and does not represent a limit on the knowledge we can have about the state of a system. He gives six examples of the experimental failure of the uncertainty principle. For example, he states that the momentum and position of an electron in a hydrogen atom are known to a precision six orders of magnitude greater than is permitted by the uncertainty principle.2 In Wesley’s causal or classical quantum theory, the uncertainty principle is superfluous.

Even Heisenberg had to admit that the uncertainty principle did not apply to retrospective measurements. As W.A. Scott Murray says:

By observing the same electron on two occasions very far apart in time and space, we can determine where that electron was at the time of the first measurement and how fast it was then moving, and we can in principle determine both those quantities after the event to any accuracy we please. ... [O]ur ability to calculate precisely the earlier position and momentum of an electron on the basis of later knowledge constitutes philosophical proof that the electron’s behaviour during the interval was determinate.3

According to the Copenhagen interpretation, microphysical particles do not obey causality as individuals, but only on average. But how can a supposedly lawless quantum realm give rise to the statistical regularities displayed by the collective behaviour of quantum systems? To assume that certain quantum events are completely noncausal just because we cannot predict them or identify the causes involved is completely unjustified. No one has ever demonstrated that any event occurred without a cause, and it is therefore reasonable to assume that causality is obeyed throughout infinite nature. H.P. Blavatsky writes: ‘It is impossible to conceive anything without a cause; the attempt to do so makes the mind a blank.’4 This implies that there must be a great many scientists walking around with blank minds!

A quantum system is represented mathematically by a wave function, which is derived from Schrödinger’s equation. The wave function can be used to calculate the probability of finding a particle at any particular point in space. When a measurement is made, the particle is of course found in only one place, but if the wave function is assumed to provide a complete description of the state of a quantum system – as it is in the Copenhagen interpretation – it would mean that in between measurements the particle dissolves into a ‘superposition of probability waves’ and is potentially present in many different places at once. Then, when the next measurement is made, this entirely hypothetical ‘wave packet’ is supposed to ‘collapse’ instantaneously, in some random and mysterious manner, into a localized particle again.
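
The way a wave function is used to calculate probabilities can be illustrated with a minimal sketch. It assumes the standard textbook rule that the probability of finding the particle in a region is the integral of the squared magnitude of the wave function over that region. The Gaussian form of the wave function and the names `gaussian_psi` and `probability` are illustrative assumptions, not anything taken from the sources cited here.

```python
import math


def gaussian_psi(x, x0=0.0, sigma=1.0):
    """Normalized real Gaussian wave function (an illustrative choice only)."""
    norm = (2.0 * math.pi * sigma ** 2) ** -0.25
    return norm * math.exp(-((x - x0) ** 2) / (4.0 * sigma ** 2))


def probability(a, b, psi=gaussian_psi, steps=10_000):
    """Probability of finding the particle between a and b.

    Integrates |psi(x)|^2 over [a, b] with the midpoint rule.
    """
    dx = (b - a) / steps
    total = 0.0
    for i in range(steps):
        x_mid = a + (i + 0.5) * dx
        total += abs(psi(x_mid)) ** 2
    return total * dx


# Chance of finding the particle within one sigma of the centre,
# and (as a sanity check) over a range wide enough to hold nearly all of it.
print(f"within one sigma: {probability(-1.0, 1.0):.4f}")
print(f"over a wide range: {probability(-10.0, 10.0):.4f}")
```

For this particular choice the squared magnitude is an ordinary bell curve, so the first figure comes out at roughly 0.68 and the second at essentially 1. The calculation itself is common to all interpretations; what they dispute is what the probabilities refer to.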

The idea that particles can turn into ‘probability waves’, which are no more than abstract mathematical constructs, and that these abstractions can ‘collapse’ into a real particle is yet another instance of physicists succumbing to a mathematical contagion – the inability to distinguish between abstractions and concrete realities.

Moreover, since the measuring device that is supposed to collapse a particle’s wave function is itself made up of subatomic particles, it seems that its own wave function would have to be collapsed by another measuring device (which might be the eye and brain of a human observer), which would in turn need to be collapsed by a further measuring device, and so on, leading to an infinite regress. In fact, the standard interpretation of quantum theory implies that all the macroscopic objects we see around us exist in an objective, unambiguous state only when they are being measured or observed. Erwin Schrödinger devised a famous thought experiment to expose the absurd implications of this interpretation. A cat is placed in a box containing a radioactive substance, so that there is a 50-50 chance of an atom decaying in one hour. If an atom decays, it triggers the release of a poison gas, which kills the cat. After one hour the cat is supposedly both dead and alive (and everything in between) until someone opens the box and instantly collapses its wave function into a dead or alive cat.

Various solutions to the ‘measurement problem’ associated with wave-function collapse have been proposed. A particularly absurd approach is the many-worlds hypothesis, which claims that the universe splits each time a measurement (or measurement-like interaction) takes place, so that all the possibilities represented by the wave function (e.g. a dead cat and a living cat) exist objectively but in different universes. Our own consciousness, too, is supposed to be constantly splitting into different selves, which inhabit these proliferating, noncommunicating worlds.

Other theorists speculate that it is consciousness that collapses the wave function and thereby creates reality. In this view, a subatomic particle does not assume definite properties when it interacts with a measuring device, but only when the reading of the measuring device is registered in the mind of an observer. According to the most extreme, anthropocentric version of this theory, only self-conscious beings such as ourselves can collapse wave functions. This means that the whole universe must have existed originally as ‘potentia’ in some transcendental realm of quantum probabilities until self-conscious beings evolved and collapsed themselves and the rest of their branch of reality into the material world, and that objects remain in a state of actuality only so long as humans are observing them.5

Some mystically minded writers have welcomed this approach as it seems to reinstate consciousness at the heart of the scientific worldview. It certainly does – but at the expense of reason, logic and common sense. According to theosophic philosophy, the ultimate reality is consciousness, or rather consciousness-life-substance, existing in infinitely varied degrees of density and in an infinite variety of forms. The physical world can be regarded as the projection or emanation of a universal mind, in the sense that it has condensed from ethereal and ultimately ‘spiritual’ grades of energy-substance, guided by patterns laid down by previous cycles of evolution. But to suggest that physical objects (e.g. the moon) don’t exist unless we humans are looking at them is just plain stupid – mystification rather than genuine mysticism. The human mind only exercises a direct and significant influence on physical objects in cases of genuine psychokinesis or ‘mind over matter’.

Wave-function collapse is described as an instantaneous event whereby a superposition of different quantum states becomes a single, definite state upon measurement. An alternative mainstream approach is ‘decoherence’, which is described as a gradual process whereby a particle loses its quantum ‘fuzziness’ by interacting with its environment. But this is not much of an improvement, because it still assumes that a particle is initially in an indeterminate state and can be in several places at once, and that it is only when the environment ‘measures’ the particle that this supposed superposition of all possible states collapses into a single, classical state – like that of the objects we see around us.

The probabilistic Copenhagen approach was strongly opposed by Albert Einstein, Max Planck and Erwin Schrödinger. In 1924 Louis de Broglie proposed that the motion of physical particles is guided by ‘pilot waves’. This idea was later developed further by David Bohm, Jean-Pierre Vigier and a number of other physicists, giving rise to an alternative, more realistic and intelligible interpretation of quantum physics.6

The Bohm-Vigier causal or ontological interpretation holds that a particle is a complex structure that is always accompanied by a pilot wave (described by the wave function), which guides its motion by exerting a quantum potential force. Particles therefore follow causal trajectories even though we cannot measure their exact motion. For Bohm the quantum potential operates from a deeper level of reality which he calls the ‘implicate order’, associated with the electromagnetic zero-point field. It is sometimes called a ‘subquantum fluid’ or ‘quantum ether’. Vigier sees it as a Dirac-type ether consisting of superfluid states of particle-antiparticle pairs. Bohm postulated that particles are not fundamental, but rather relatively constant forms produced by the incessant convergence and divergence of waves in a ‘superimplicate order’, and that there may be an endless series of such orders, each having both a matter aspect and a consciousness aspect.
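
The guiding role of the pilot wave can be stated compactly in the form usually given in textbook accounts of Bohm’s 1952 formulation. The notation is an assumption of this sketch rather than something taken from the sources cited here: R and S are the real amplitude and phase of the wave function ψ, m is the particle’s mass, V the ordinary potential, v the particle’s velocity and Q the quantum potential.

```latex
\psi = R\, e^{iS/\hbar},
\qquad
\mathbf{v} = \frac{\nabla S}{m},
\qquad
Q = -\frac{\hbar^{2}}{2m}\,\frac{\nabla^{2} R}{R},
\qquad
m\,\frac{d\mathbf{v}}{dt} = -\nabla\,(V + Q)
```

In this form the ‘quantum potential force’ is the term −∇Q. It depends on the shape of the amplitude R rather than on its overall magnitude, since any constant factor in R cancels in the ratio.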

One of the classic demonstrations of the supposed weirdness of the quantum realm is the double-slit experiment. The apparatus consists of a light source, a plate with two slits cut into it, and behind it a photographic plate that serves as a screen. If both slits are open, an interference pattern builds up on the screen even when photons or other quantum particles are assumed to approach the slits one at a time. The Copenhagen interpretation is that a single particle passes in some indefinable sense through both slits simultaneously and somehow interferes with itself; this is attributed to ‘wave-particle duality’, for which it offers no further explanation. In the Bohm-Vigier approach, on the other hand, each particle passes through only one slit whereas the quantum wave passes through both, giving rise to the interference pattern. If a device is used to detect through which slit each particle travels, the interference pattern disappears. In the Copenhagen interpretation, the measurement collapses the wave function, whereas in the causal approach it affects the real pilot wave. The Copenhagen interpretation claims that any path-determining measurement will destroy the interference pattern, whereas the causal interpretation predicts that interference will persist if future techniques allow a sufficiently subtle, nondestructive measurement to be performed.
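
The difference between a screen with fringes and one without can be sketched numerically. The following is a minimal illustration under simplifying assumptions: two idealized, infinitely narrow slits, a distant screen, and equal unit amplitudes from each slit. The function name `two_slit_intensity` and the numerical values are hypothetical. The arithmetic is the ordinary wave calculation and does not favour either interpretation; they differ only over what the wave is.

```python
import cmath
import math


def two_slit_intensity(theta, slit_separation, wavelength, coherent=True):
    """Relative far-field intensity at angle theta (radians) behind two slits.

    coherent=True  : the two wave amplitudes are added, then squared,
                     which produces interference fringes.
    coherent=False : the two intensities are simply added, giving the
                     fringe-free pattern seen when the path is detected.
    """
    # Phase difference between the two paths (idealized narrow slits).
    phase = 2.0 * math.pi * slit_separation * math.sin(theta) / wavelength
    a1 = 1.0 + 0.0j             # unit amplitude from slit 1
    a2 = cmath.exp(1j * phase)  # unit amplitude from slit 2, phase-shifted
    if coherent:
        return abs(a1 + a2) ** 2
    return abs(a1) ** 2 + abs(a2) ** 2


wavelength = 500e-9       # hypothetical value: 500 nm light
slit_separation = 50e-6   # hypothetical value: 50 micrometre spacing
first_minimum = math.asin(wavelength / (2.0 * slit_separation))

for label, theta in (("centre", 0.0), ("first dark fringe", first_minimum)):
    fringes = two_slit_intensity(theta, slit_separation, wavelength)
    no_fringes = two_slit_intensity(
        theta, slit_separation, wavelength, coherent=False
    )
    print(f"{label}: with interference={fringes:.3f}, "
          f"path detected={no_fringes:.3f}")
```

With interference the intensity swings between four units at the centre and zero at the dark fringe; when the intensities are merely added it stays at two units everywhere, which is the featureless pattern described above.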

In the Bohm-Vigier model, then, the quantum world exists even when it is not being observed and measured. It rejects the positivist view that something that cannot be measured or known precisely cannot be said to exist. The probabilities calculated from the wave function indicate the chances of a particle being at different positions regardless of whether a measurement is made, whereas in the conventional interpretation they indicate the chances of a particle coming into existence at different positions when a measurement is made.